Kubernetes is the de facto standard for container management and is still growing fast. It enables a multitude of new, scalable, and resilient cloud native applications to thrive.

These container-based applications typically run on a limited number of worker nodes that form a Kubernetes cluster. A cluster typically has one or three management (control plane) nodes, depending on availability requirements.
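Why one or three, and not two? A minimal sketch of the arithmetic, assuming the management nodes store cluster state in a majority-based datastore such as etcd (the common default, but an assumption here). The function names are only illustrative.

```python
def quorum(members: int) -> int:
    """Smallest majority of a cluster with `members` voting nodes."""
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """How many nodes can fail while a majority is still reachable."""
    return members - quorum(members)


if __name__ == "__main__":
    for members in (1, 2, 3):
        print(
            f"{members} node(s): quorum={quorum(members)}, "
            f"tolerated failures={tolerated_failures(members)}"
        )
```

Under that assumption, a second node adds no fault tolerance over a single one, while three nodes survive the loss of one. That is why the choice is usually between one node (no high availability) and three.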

On top of that, you ideally have a service that provisions these guest clusters and manages their lifecycle, so that operations are standardized and automated. In vSphere with Tanzu this role is filled by the supervisor cluster, which is built as the bridge between classical virtualization and the new cloud native realm. Overall, there is a lot to manage.

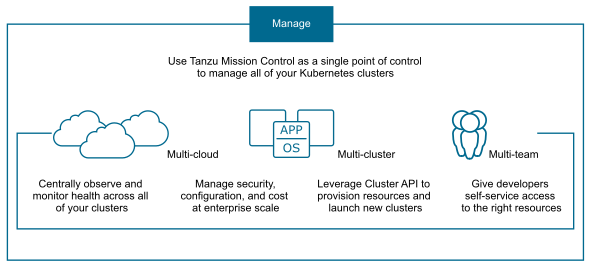

Once your enterprise has a growing footprint of diverse Kubernetes clusters, it becomes difficult to retain visibility into all of them. You need to make sure every cluster complies with your policies and scales with demand, and you need a clear understanding of what is happening in each one.

This is especially true if you use different Kubernetes platforms both on- and off-prem, for example vSphere with Tanzu, AKS (Azure Kubernetes Service), and EKS (Amazon Elastic Kubernetes Service).

Tanzu Mission Control (TMC) addresses that issue and lets you connect and manage various Kubernetes clusters from a single pane of glass. However, until June 2023, TMC was only available as a Software-as-a-Service subscription. That meant you had to connect your on-prem infrastructure through the internet. Thanks to its secure bidirectional communication mechanism, this posed no security threat in itself; for a dark site or an environment with high compliance requirements, however, it could be a significant hindrance.

A Brief Odyssey into Tanzu Mission Control Self Managed

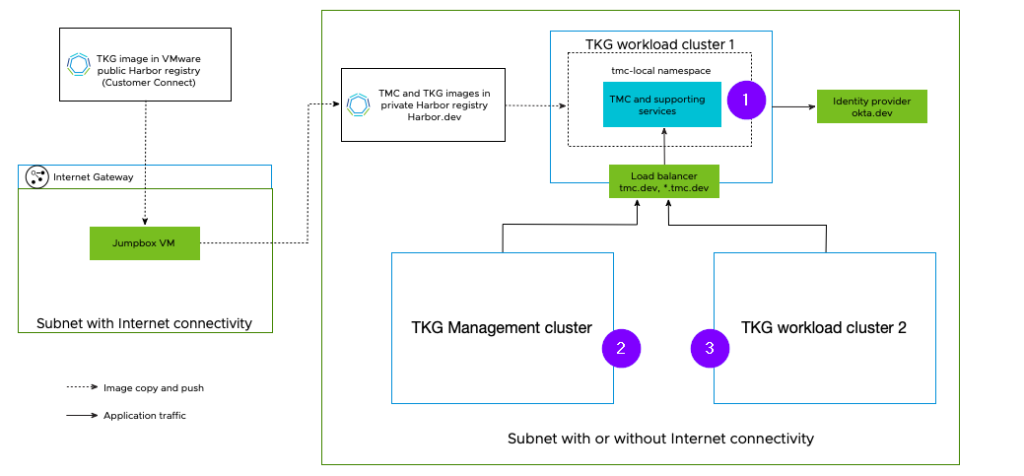

Unlike the SaaS version, the self-managed version has to be installed by you. The TMC application runs as a local instance in your TKG workload cluster (1).

After successful installation and integration of a supported identity provider, you can attach and manage either TKG management clusters (2) or workload clusters (3).

Supporting services such as a prepared Harbor container registry and load balancing for Kubernetes services of type LoadBalancer are required. You usually set these up anyway during the initial TKG configuration.

In addition, you need to establish trust with Harbor by adding its CA certificate.

To use TMC for backing up workload clusters, you need S3 storage as a target for the Velero backup functionality. Note that TMC does include an internal MinIO instance, but it is used solely for audit reports and scan results.
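For orientation, here is a minimal sketch of how plain Velero describes an S3 target: a `BackupStorageLocation` object. It assumes an S3-compatible endpoint served through Velero's AWS object store plugin. The names `tmc-backups`, `cluster-backups`, and `https://s3.example.internal` are hypothetical placeholders, and how TMC creates its own target locations is not shown here.

```python
import json


def backup_storage_location(name, bucket, s3_url, region="minio"):
    """Build a Velero BackupStorageLocation manifest as a plain dict.

    All argument values are supplied by the caller; nothing here is
    specific to TMC.
    """
    return {
        "apiVersion": "velero.io/v1",
        "kind": "BackupStorageLocation",
        "metadata": {"name": name, "namespace": "velero"},
        "spec": {
            # "aws" selects the S3 object store plugin, which is also
            # used for S3-compatible stores.
            "provider": "aws",
            "objectStorage": {"bucket": bucket},
            "config": {
                "region": region,
                # Path-style addressing is typically needed for
                # non-AWS, S3-compatible endpoints.
                "s3ForcePathStyle": "true",
                "s3Url": s3_url,
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical example values, for illustration only.
    manifest = backup_storage_location(
        "tmc-backups", "cluster-backups", "https://s3.example.internal"
    )
    print(json.dumps(manifest, indent=2))
```

The point of the sketch is only that the backup target is an external bucket plus endpoint that you provide yourself; the internal MinIO instance plays no part in it.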

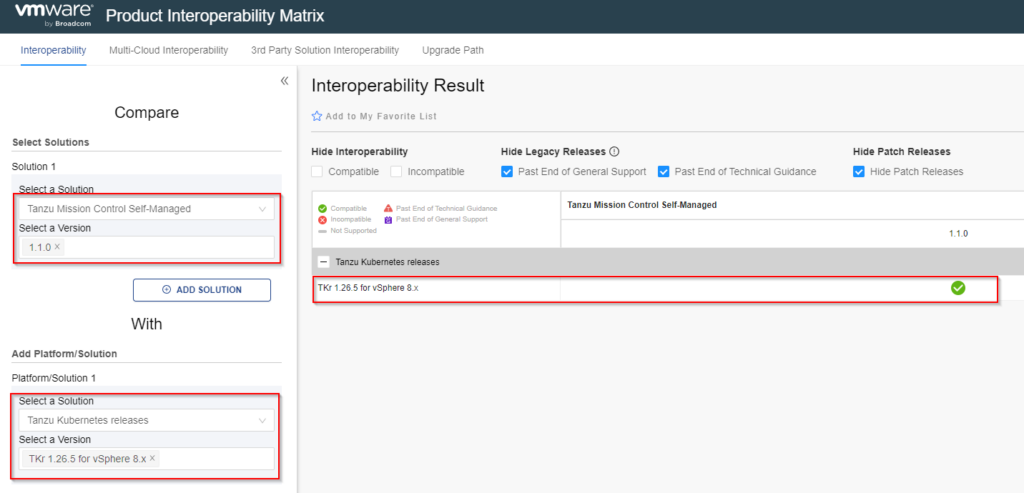

TKG clusters running in vSphere and TKG workload clusters running in vSphere 7.x and 8.x are supported. Always check the interoperability matrix to confirm that the versions you need are supported.

Limits and Possibilities: Understanding the Realms of TMC Self Managed

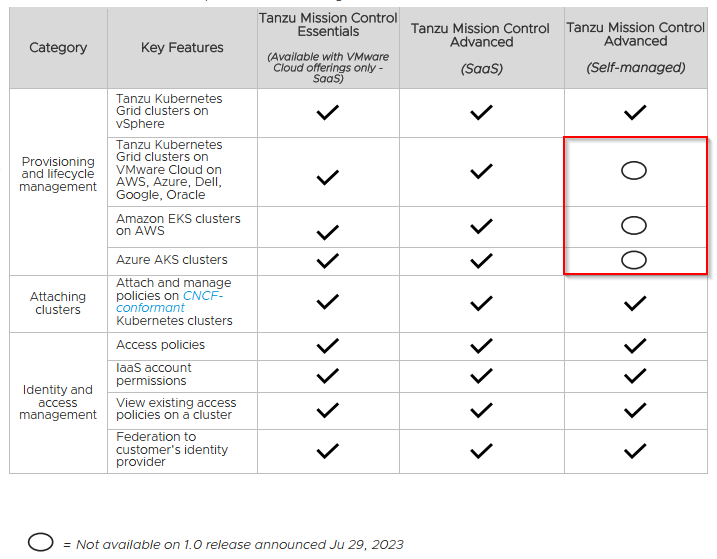

Greater control comes at the cost of fewer features, so TMC self-managed is primarily used for on-premises, compliance-focused, or dark-site Kubernetes installations that require centralized management.

The key facts: you need the advanced licensing of TMC to use the self-managed version. Furthermore, there are limitations on managing and provisioning TKG clusters on public clouds and AWS/EKS clusters.

There are further limitations and changed behaviors; see the TMC comparison chart and the "Using TMC Self-Managed" part of the documentation (links below).

I can also recommend a hands-on lab to get a first impression yourself:

https://customerconnect.vmware.com/en/evalcenter?p=tanzu-mc-sim-hol-gen-21

Tanzu Mission Control Self Managed — Useful Links

TMC Comparison Chart:

https://tanzu.vmware.com/content/tanzu-mission-control/tmc-comparison-chart

Limitations / Changed Behavior:

https://docs.vmware.com/en/VMware-Tanzu-Mission-Control/1.1/tanzumc-sm-install/using-tmc-sm.html

TMC self-managed documentation:

https://docs.vmware.com/en/VMware-Tanzu-Mission-Control/1.1/tanzumc-sm-install/index-sm-install.html

Interoperability Matrix:

https://interopmatrix.vmware.com/Interoperability?col=1771,17901&row=820,17283